Goal of the prediction model in video coding is to reduce redundancy by forming a prediction of the data and subtracting this prediction from the current data. The prediction may be formed from

- Previously coded frames (a temporal prediction)

- Previously coded image samples in the same frame (a spatial prediction).

The output of the prediction model is a set of residual. More accurate the prediction process, the less energy is contained in the residual. The residual is encoded and sent to the decoder. A decoder re-creates the same prediction so that it can add the decoded residual and reconstruct the current frame. For a decoder to create an identical prediction, it is essential that the encoder forms the prediction using only data available to the decoder. So data used by encoder to generate the prediction must be been coded and transmitted.

Temporal prediction

The predicted frame is created from one or more past or future frames known as reference frames. The accuracy of the prediction can be improved by compensating for motion between the reference frame(s) and the current frame.

The simplest method of temporal prediction is to use the previous frame as the predictor for the current frame. The problem with this simple prediction is that a lot

of energy remains in the residual frame. This implies that there is still a significant amount of information to compress after temporal prediction.

Changes due to motion

Causes of changes between video frames include motion, uncovered regions and lighting changes. Types of motion include

- Rigid object motion, for example a moving car

- Deformable object motion, for example a person speaking, and camera motion such as panning, tilt, zoom and rotation.

- An uncovered region may be a portion of the scene background uncovered by a moving object.

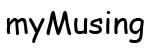

With the exception of uncovered regions and lighting changes, other differences correspond to pixel movements between frames. It is possible to estimate the trajectory of each pixel between successive video frames, producing a field of pixel trajectories known as the optical flow. Following figure shows the optical flow field for the frames. The complete field contains a flow vector for every pixel position.

If the optical flow field is known, it is possible to form an accurate prediction of most of the pixels of the current frame by moving each pixel from the reference frame

along its optical flow vector.

Block-based motion estimation and compensation

Widely used method of motion compensation is to compensate for movement of rectangular sections or blocks of the current frame. The following procedure is carried

out for each block of MxN samples in the current frame:

- Search an area in the reference frame, a past or future frame, to find a similar MxN-sample region. This search may be carried out by comparing the MxN block in the current frame with some or all of the possible MxN regions in a search area. A search region is a region centred on the current block position, and finding the region that gives the best match. A popular matching criterion is the energy in the residual formed by subtracting the candidate region from the current MxN block. The candidate region that minimises the residual energy is chosen as the best match. This process of finding the best match is known as Motion Estimation.

- The chosen candidate region becomes the predictor for the current MxN block (a motion compensated prediction) and is subtracted from the current block to form a residual MxN block.

- The residual block is encoded and transmitted and the offset between the current block and the position of the candidate region (motion vector) is also transmitted.

The decoder uses the received motion vector to re-create the predictor region. It decodes the residual block, adds it to the predictor and reconstructs a version of the original block.

Motion compensated prediction of a macroblock

The macroblock, corresponding to a 16 × 16-pixel region of a frame, is the basic unit for motion compensated prediction in a number of important visual coding standards including H.264. Motion estimation of a macroblock involves finding a 16 × 16-sample region in a reference frame that closely matches the current macroblock. The reference frame is a previously encoded frame from the sequence and may be before or after the current frame in display order.

Motion compensation block size

The energy in the residual is reduced by motion compensating each 16 × 16 macroblock. Motion compensating each 8 × 8 block instead of each 16 × 16 macroblock

reduces the residual energy further and motion compensating each 4 × 4 block gives the smallest residual energy of all. These examples show that smaller motion compensation block sizes can produce better motion compensation results. However, a smaller block size leads to increased complexity, with more search operations to be carried out, and an increase in the number of motion vectors that need to be transmitted.

Sub-pixel motion compensation

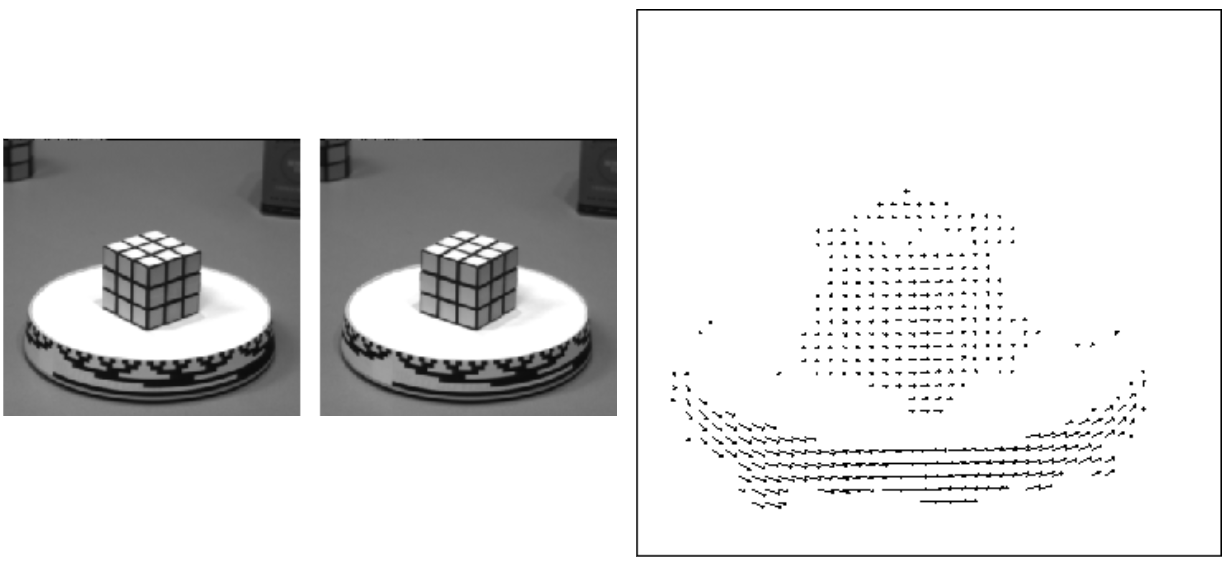

In some cases, predicting from interpolated sample positions in the reference frame may form a better motion compensated prediction. Sub-pixel motion estimation and compensation involves searching subpixel interpolated positions as well as integer-pixel positions and choosing the position that gives the best match and minimizes the residual energy. Below figure shows the concept of quarter-pixel motion estimation. In the first stage, motion estimation finds the best match on the integer pixel grid (circles). The encoder searches the half-pixel positions immediately next to this best match (squares) to see whether the match can be improved and if required, the quarter-pixel positions next to the best half-pixel position (triangles) are then searched.

Spatial (Intra) Prediction

The prediction for the current block of image samples is created from previously-coded samples in the same frame. Assuming that the blocks of image samples are coded in raster-scan order, the upper/left shaded blocks are available for intra prediction. These blocks have already been coded and placed in the output bitstream. When the decoder processes the current block, the shaded upper/left blocks are already decoded and can be used to re-create the prediction.